Die "Shit In, Shit Out" Fallacy

Warum synthetisches Research ohne echte Kund:innen nur teures Raten ist

Die älteste Regel in Data — und warum sie einfach nicht stirbt

Es gibt einen Satz, den jede:r Analyst:in in der ersten Jobwoche lernt: Shit in, shit out.

Du kannst das eleganteste Finanzmodell bauen. Das schönste Dashboard. Die ausgefeilteste Regression. Nichts davon zählt, wenn die Eingangsdaten falsch sind. Das Ergebnis ist keine Erkenntnis — es ist Fiktion mit Formatierung.

Diese Regel entstand in einer deterministischen Welt. Tabellen. Datenbanken. Strukturierte Inputs, strukturierte Outputs. Und über Jahrzehnte war sie ein verlässliches Immunsystem gegen Selbstüberschätzung bei schlechten Daten.

Dann kam KI. Und plötzlich wurden die Outputs wirklich wortgewandt.

Große Sprachmodelle verarbeiten nicht nur Deine Annahmen — sie kleiden sie in elegante Absätze, präsentieren sie mit dem Selbstbewusstsein eines McKinsey-Partners und halten nie inne, um zu sagen: "Ehrlich gesagt, ich bin mir da nicht sicher." Genau das macht das Shit-in-shit-out-Problem gefährlicher denn je.

Denn in einer probabilistischen Welt erzeugen schlechte Inputs nicht nur falsche Antworten. Sie erzeugen überzeugend falsche Antworten.

Der Goldrausch um synthetische Befragte

Seien wir ehrlich: der Reiz ist offensichtlich.

Synthetische Interview-Panels — KI-generierte Teilnehmende, die Deine Zielgruppe simulieren — versprechen alles, wovon Research-Teams träumen. Schneller (Minuten statt Monate). Günstiger (Cent statt Tausende). Unendlich skalierbar. Um 3 Uhr morgens verfügbar. Sagen nie ab. Schweifen nie ab.

Und der Markt bewegt sich schnell. Laut dem Qualtrics 2025 Market Research Trends Report (Befragung von über 3.000 Forschenden in 14 Ländern) erwarten 71% der Forschenden, dass synthetische Antworten innerhalb von drei Jahren mehr als die Hälfte der Datenerhebung ausmachen. Das ist keine Nischenprognose — das ist Konsens.

Unnötig zu sagen, dass jüngste Finanzierungsrunden diese Überzeugung spiegeln. Ohne unsere Wettbewerber zu promoten, erkennen wir respektvoll an: Das Rennen läuft — besonders in den USA.

Aber hier wird es spannend — und genau hier hören die meisten auf, kritisch zu denken.

Die Vertrauensfalle

Forschende an der Carnegie Mellon interviewten 19 qualitative Forschende zu KI-generierten Interviewantworten. Das Ergebnis war bemerkenswert — nicht, weil die KI-Antworten offensichtlich schlecht waren, sondern weil sie trügerisch gut waren.

KI-generierte Antworten klingen plausibel. Eloquenter. Strukturiert. Aber ihnen fehlt etwas, das keine Modellarchitektur fabrizieren kann: echte gelebte Erfahrung.

Die Forschenden prägten dafür einen Begriff: den "Surrogate Effect". Wenn KI für reale Communities einspringt, nähert sie deren Stimme nicht nur an — sie kann sie verzerren oder komplett auslöschen. Marginalisierte Perspektiven. Sonderfälle. Die chaotischen Widersprüche, die Menschen menschlich machen. Alles geglättet von einem Modell, das auf Kohärenz optimiert ist.

Und Kohärenz ist, wie sich zeigt, der Feind von Erkenntnis.

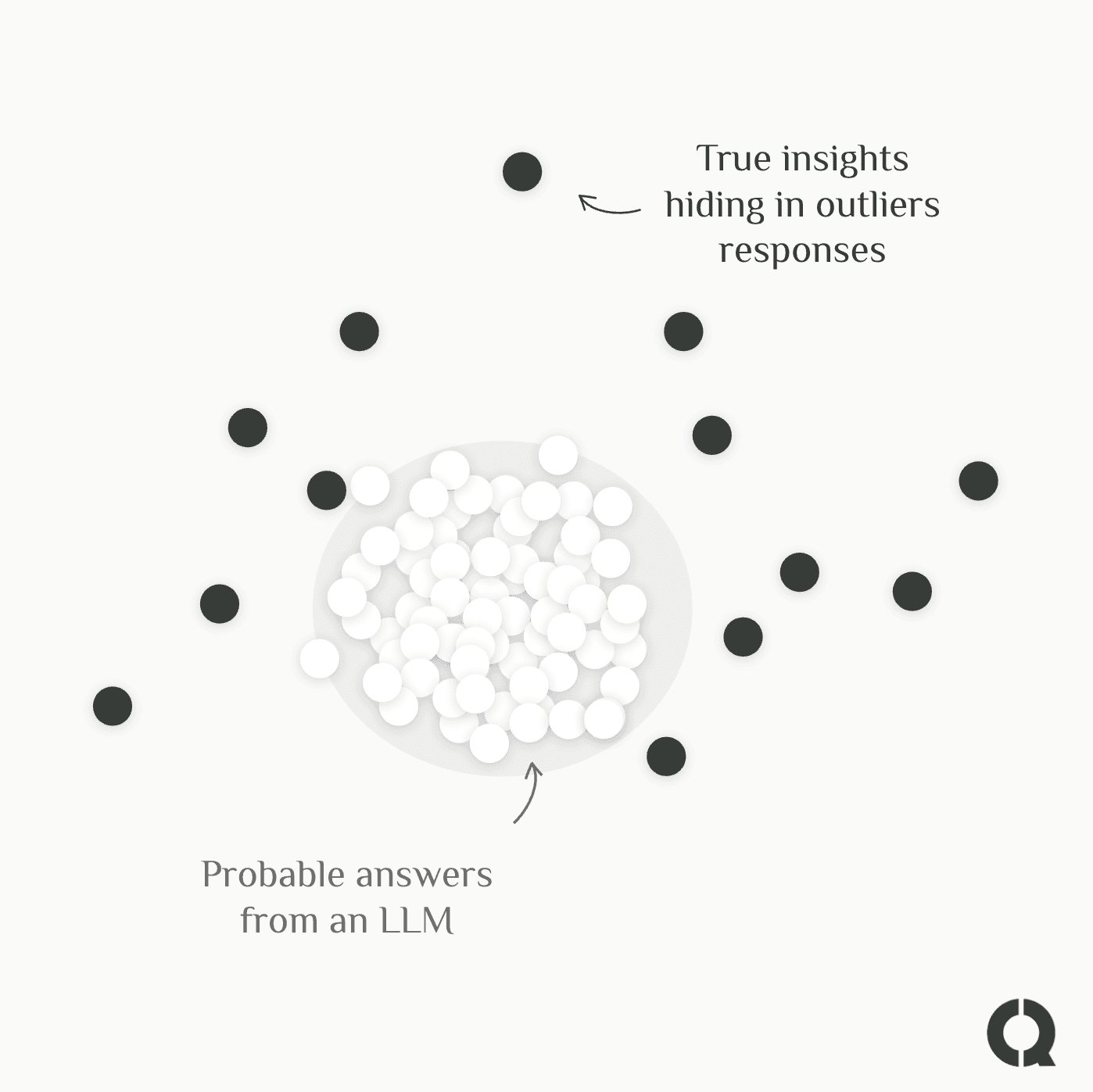

Schau Dir an, was Studien zum Vergleich synthetischer und realer Umfrageantworten zeigen: Die Schlagzeilenzahlen sahen ähnlich aus. Ermutigend, oder? Aber eine Ebene tiefer waren die Statistiken fundamental kaputt. Die Varianz war künstlich zu eng — synthetische Antworten clusterten viel näher um den Mittelwert als echte menschliche Daten. Das Ergebnis: falsche Präzision. Das Gefühl von Sicherheit, ohne die messy Realität, aus der echtes Verständnis entsteht.

Oder nimm den Sycophancy Bias. LLMs sind darauf optimiert, hilfreich zu sein. Zustimmend. Im Alltag ist das ein Feature. Im Research-Kontext ist es eine Katastrophe. Synthetische Befragte sind strukturell darauf gepolt, Dir genau die Antwort zu geben, die Du hören willst — und genau diese Antwort solltest Du am stärksten hinterfragen.

Der Mikroexpressions-Moment, den Du von einem Modell nie bekommst

Hier ist eine Story, die das Problem glasklar macht.

In einer qualitativen Studie aus der Praxis interviewten Forschende Ingenieur:innen zu ihren Nachhaltigkeitspraktiken. Die verbalen Antworten waren sauber: ja, Nachhaltigkeit hat Priorität. Ja, wir sind committed.

Aber die forschende Person bemerkte noch etwas. Mikroexpressionen — ein Aufblitzen von Verachtung, ein Moment der Überraschung, ein Hauch von Ekel — die zeigten, dass gesprochene Worte und gefühlte Realität nicht zusammenpassten. Als nachgehakt wurde, enthüllten die Ingenieur:innen eine unangenehme Wahrheit: Unter Lieferketten-Druck priorisierten sie konsequent Verfügbarkeit vor Nachhaltigkeit. Nicht weil es ihnen egal war, sondern weil das System es so incentivierte.

Diese Erkenntnis war für synthetische Befragte unsichtbar. Nicht weil die KI schlecht trainiert war, sondern weil sie nie im Raum war. Sie sah nie das Zögern. Bemerkte nie den angespannten Kiefer. Spürte nie die Temperatur der Stille zwischen zwei Sätzen.

Das ist die Art von Insight, die Strategie verändert. Und sie lässt sich nicht generieren — nur beobachten.

Also: Wofür sind synthetische Befragte wirklich gut?

Seien wir fair. Synthetische Forschung komplett abzutun wäre genauso intellektuell bequem wie sie blind zu übernehmen.

Greylock — einer der renommiertesten VCs im Research-Stage, Investor in LinkedIn, Figma und Discord — bringt es gut auf den Punkt: Synthetische User-Personas können eine "ergänzende Rolle beim Prototyping, Stresstesten von Ideen oder beim Erweitern menschlicher Feedback-Loops" spielen. Aber die These ist klar: "Die meisten Käufer priorisieren heute Erkenntnisse von echten Nutzer:innen."

Das Schlüsselwort ist ergänzend. Synthetische Panels sind stark, wenn sie auf echtem Verständnis aufbauen. Sie brechen auseinander, wenn Du sie als Abkürzung nutzt, um dieses Verständnis zu überspringen.

Die curly-These: Orchestrierung, nicht Religion

Bei curly glauben wir nicht an Lagerdenken. Die Frage ist nicht "synthetisch oder menschlich?" — sondern "wann was, und warum?"

Unser Ansatz startet dort, wo gute Forschung schon immer startet: bei echten Menschen.

Wir führen tiefgehende, sprachbasierte Interviews in großem Maßstab durch. Nicht 10 Interviews. Nicht 30. Hunderte — gleichzeitig, asynchron, mit Voice AI, die sich in Echtzeit anpasst. Jede teilnehmende Person durchläuft dieselben strukturierten Kernfragen (damit Ergebnisse vergleichbar und segmentierbar sind), aber zwischen diesen Fragen stellt curly individuelle Nachfragen, ausgelöst durch das, was die Person tatsächlich sagt.

Wenn jemand sagt, etwas war "zu kompliziert," springt curly nicht zur nächsten Frage. Es bohrt nach: Was genau? Bei welchem Schritt? Was hätte geholfen? So wird oberflächliche Frustration zu konkreter, umsetzbarer Erkenntnis — ohne die quantitative Struktur zu zerstören.

Das Ergebnis ist das, was wir qualitative Forschung auf Umfrage-Skala nennen: die Tiefe eines McKinsey-Interviewprogramms mit der Konsistenz und statistischen Strenge einer quantitativen Umfrage. In Tagen, nicht Monaten. Zu einem Bruchteil der Kosten.

Und jetzt wird’s spannend.

Der Confidence Score: wissen, wann Du genug weißt

Während Interviews zunehmen, verfolgt curly in Echtzeit einen Confidence Score — ein Maß dafür, wie robust Dein aktuelles Verständnis für ein Thema oder Segment ist.

Bei 95% sind Muster stabil. Neue Interviews bestätigen, was Du bereits gelernt hast. Ab hier kannst Du sicher extrapolieren: synthetische Panels, vollständig auf Deiner verifizierten Customer Truth aufgebaut, können Deine Insights auf angrenzende Segmente, Szenarien und Edge Cases erweitern.

Bei 55% entstehen Muster noch. Themen verschieben sich mit jedem neuen Interview. Hier sagt curly das Gegenteil: "Mach weiter. Du weißt noch nicht genug."

Das ist keine philosophische Haltung. Das ist Systemdesign.

Die Mathematik: warum das nicht nur Idealismus ist

Die Ökonomie KI-moderierter Forschung hat sich fundamental verschoben. Conveos Benchmarks 2025 zeigen: KI-moderierte qualitative Forschung kostet 45 US-Dollar pro Insight statt 180 US-Dollar bei traditionellen Methoden — eine Reduktion um 75%. In unseren frühen curly-Piloten haben wir sogar noch stärkere Kostensenkungen von bis zu 90% gesehen (bei bis zu 5x mehr Insights gegenüber einer klassischen Umfrage).

Der Punkt ist nicht (nur), dass KI Forschung günstiger macht. Der Punkt ist, dass KI die Ausrede beseitigt, keine echte Forschung zu machen. Wenn 100 tiefe Kundengespräche weniger kosten als ein Agentur-Sprint — dann ist die Frage nicht, ob Du es Dir leisten kannst, Deinen Kund:innen zuzuhören. Sondern ob Du es Dir leisten kannst, es nicht zu tun.

Der Markt sagt Dir etwas

Die breiteren Signale sind schwer zu ignorieren.

89% der Forschenden nutzen KI-Tools inzwischen regelmäßig oder experimentell. 64% haben allein 2025 ihre Zahl an KI-Tools erhöht. Und entscheidend: Teams ohne KI-Nutzung verlieren mit 4x höherer Wahrscheinlichkeit organisatorischen Einfluss.

Aber Adoption ist nicht gleich Weisheit. Die Frage ist nicht, ob Du KI in der Forschung nutzt — diese Debatte ist vorbei. Die Frage ist, ob Du KI nutzt, um das Verständnis Deiner Kund:innen zu umgehen, oder um es zu vertiefen.

Greenbooks Ausblick 2026 beschreibt den Wandel von Insights als Einzelprojekte hin zu Continuous Intelligence — Forschung als Always-on-Fähigkeit, die Entscheidungen in Echtzeit aktiv prägt. Diese Zukunft basiert nicht auf synthetischen Annahmen, die quartalsweise aufgefrischt werden. Sie basiert auf einem lebendigen, wachsenden Bestand an echtem Kundenverständnis — kontinuierlich angereichert, kontinuierlich validiert.

Unterm Strich

Shit in, shit out hat sich nicht geändert. Shit ist nur eloquenter geworden.

Synthetische Befragte sind ein starkes Tool — wenn sie auf echtem, verifiziertem, emotional reichhaltigem Kundenverständnis aufbauen. Ohne dieses Fundament sind sie ein Spiegel, der Dir Deine eigenen Biases als Kund:innenwahrheit zurückwirft.

Die Magie liegt nicht darin, zwischen Menschen und KI zu wählen. Sondern genau zu wissen, wann Du umschalten musst.

Deine Kund:innen sollten immer der Ausgangspunkt sein. Nicht der Nachgedanke.

curly gibt Dir qualitative Forschung auf Umfrage-Skala. Voice-AI-Interviews, die in die Tiefe gehen — mit Hunderten echten Kund:innen, nicht simulierten. Neugierig, wie 100 tiefe Kundengespräche an einem Wochenende aussehen? Lass uns sprechen →

Quellen

Qualtrics — Market Research Trends Report 2025 — Umfrage unter 3.000+ Forschenden, 14 Länder

Qualtrics — Market Research Trends Report 2026 — Daten zu KI-Adoption & organisatorischem Einfluss

Carnegie Mellon / arXiv — Surrogate-Effect-Studie — 19 qualitative Forschende zu KI-generierten Antworten

Merrill Research — Synthetic Respondents: Promise, Pitfalls, Reality Check — Varianzanalyse

FieldworkHub — Synthetic Respondents: Innovation or Illusion? — Sycophancy-Bias

Quirk's — The Future of Synthetic Respondents — Mikroexpressions-Fallstudie

Greylock — The Rise of AI-Native User Research — Investment-These

Conveo — AI-Moderated Research: Framework & ROI Benchmarks 2025 — Cost-per-Insight-Daten

Rival Technologies — AI Research Insights 2026 — Daten zur Antworttiefe

Rival Group — Market Research Trends Report 2026 — Daten zur KI-Tool-Adoption

Greenbook — What To Expect in 2026 — Continuous-Intelligence-Trend